TU Grade

AI-Powered Automatic Short Answer Grading with Explanatory Feedback

Overview

The Problem

Manual grading of short answer questions is time-consuming and subjective, and it rarely gives students timely, detailed feedback. Educators struggle to provide individualized responses at scale, while students receive delayed or minimal explanations that slow their learning. In addition, labeled training data for educational AI models is scarce.

The Solution

TU Grade is an AI-powered grading assistant that automatically evaluates student responses and provides detailed, individualized feedback. Built on a fine-tuned large language model and integrated into Moodle as a custom question type, it serves as a second opinion for educators while delivering immediate, constructive feedback to students. The system is based on Leon Camus's Master's thesis and was deployed experimentally at TU Darmstadt during 2024-2025.

Key Features

Automatic Scoring

0-9 point scale with AI-generated justifications for each grade, providing transparent and consistent evaluation.

Individual Feedback

Personalized, explanatory responses for every student submission, helping learners understand their mistakes.

RAG-Powered References

Retrieval-Augmented Generation dynamically retrieves relevant reference answers for context-aware grading.
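
As a rough illustration of the retrieval step, the sketch below ranks stored reference answers by their similarity to a student answer and returns the closest ones. It is a toy stand-in, not TU Grade's implementation: the bag-of-words cosine similarity, the function names, and the `REFERENCES` data are all assumptions made for this example, and a production system would more likely use learned embeddings.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_references(student_answer, references, top_k=2):
    """Return the top_k reference entries most similar to the student answer."""
    query = bag_of_words(student_answer)
    ranked = sorted(
        references,
        key=lambda ref: cosine_similarity(query, bag_of_words(ref["answer"])),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical reference answers, each with a grade on the 0-9 scale.
REFERENCES = [
    {"answer": "TCP provides reliable ordered delivery using acknowledgements and retransmission.", "score": 9},
    {"answer": "UDP is connectionless and does not guarantee delivery.", "score": 9},
    {"answer": "A hash table maps keys to values using a hash function.", "score": 9},
]

best = retrieve_references("TCP uses acknowledgements to deliver data reliably", REFERENCES, top_k=1)
```

The retrieved entries would then be placed in the grading prompt as context alongside the student's answer.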

Configurable Rubrics

Customizable grading parameters per exercise, allowing educators to define specific evaluation criteria.

High-Performance Backend

A Rust API backed by the vLLM inference engine delivers low-latency responses for real-time grading feedback.

Moodle Integration

Custom question type with seamless LMS integration, working within your existing Moodle infrastructure.

How It Works

TU Grade has two components: a backend built for efficient AI inference and a Moodle plugin that embeds grading into existing courses.

Backend System

- Rust-based API for high performance and reliability

- vLLM for optimized model inference

- PostgreSQL database for data persistence

- RAG (Retrieval-Augmented Generation) for reference answers

- Token-based authentication per exercise

- Optimized for low-latency grading responses
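
Per-exercise token authentication could look roughly like the sketch below. It is written in Python for brevity even though the backend is a Rust API, and the `ExerciseTokens` class and its methods are hypothetical names for this example. The only idea it illustrates is that each exercise has its own secret token and that comparison is done in constant time.

```python
import hmac
import secrets


class ExerciseTokens:
    """Hypothetical in-memory store holding one secret token per exercise."""

    def __init__(self):
        self._tokens = {}

    def issue(self, exercise_id):
        """Create and store a fresh random token for the given exercise."""
        token = secrets.token_urlsafe(32)
        self._tokens[exercise_id] = token
        return token

    def is_authorized(self, exercise_id, presented_token):
        """Check a presented token against the one issued for this exercise."""
        expected = self._tokens.get(exercise_id)
        if expected is None:
            return False
        # compare_digest avoids leaking information through timing differences.
        return hmac.compare_digest(expected.encode(), presented_token.encode())


store = ExerciseTokens()
token_a = store.issue("exercise-a")
token_b = store.issue("exercise-b")
```

Scoping tokens to a single exercise means a leaked token exposes only that exercise rather than the whole backend.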

Moodle Plugin

- PHP-based question type plugin

- Derived from short answer question type

- Configuration interface for educators

- Student answer input (text field or area)

- Review interface with AI feedback display

- Seamless LMS integration

User Interface

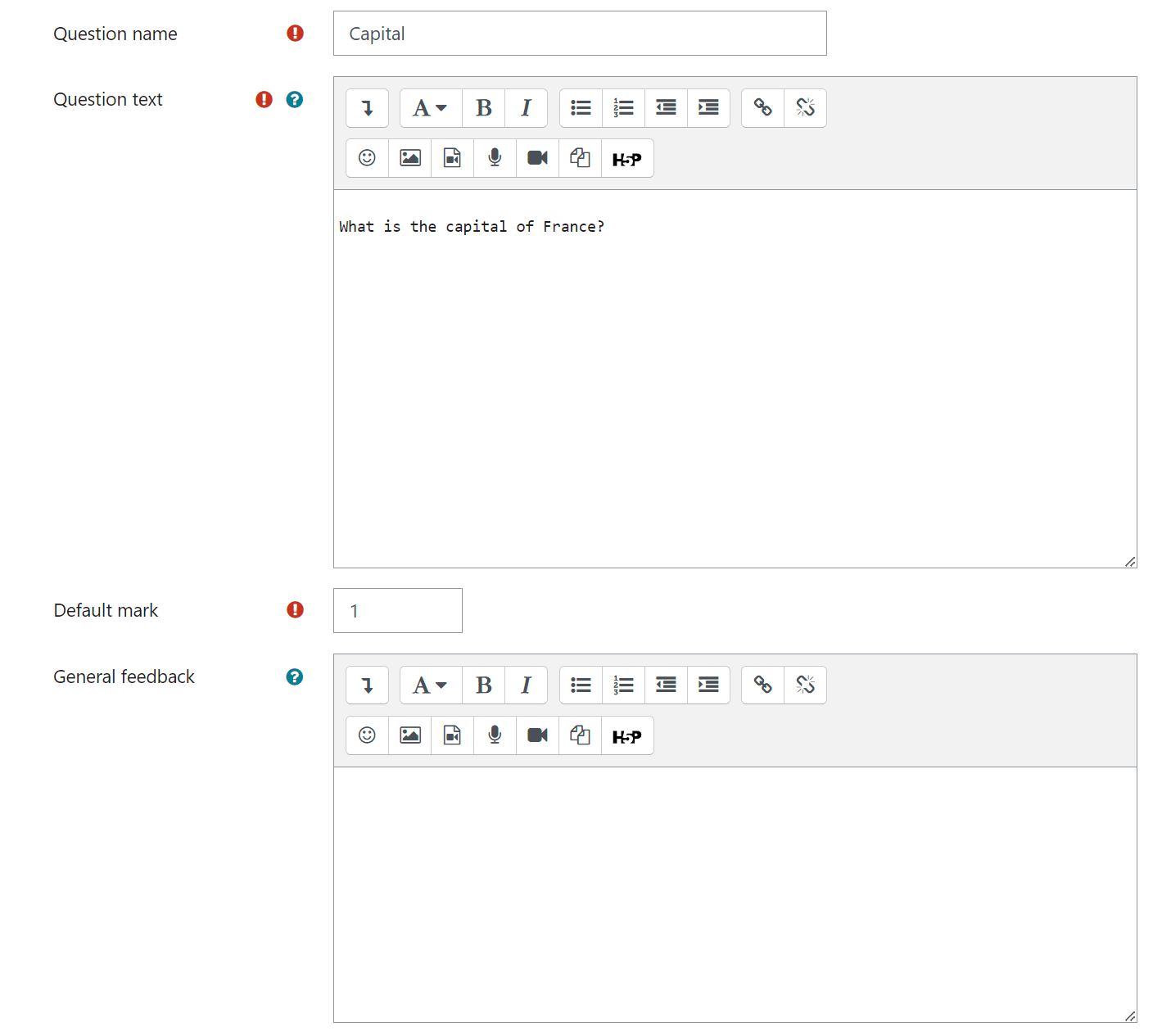

Exercise Configuration

The configuration interface allows educators to set up questions, define reference answers, and configure grading parameters:

Question text editor with rich text formatting options for the student-facing question.

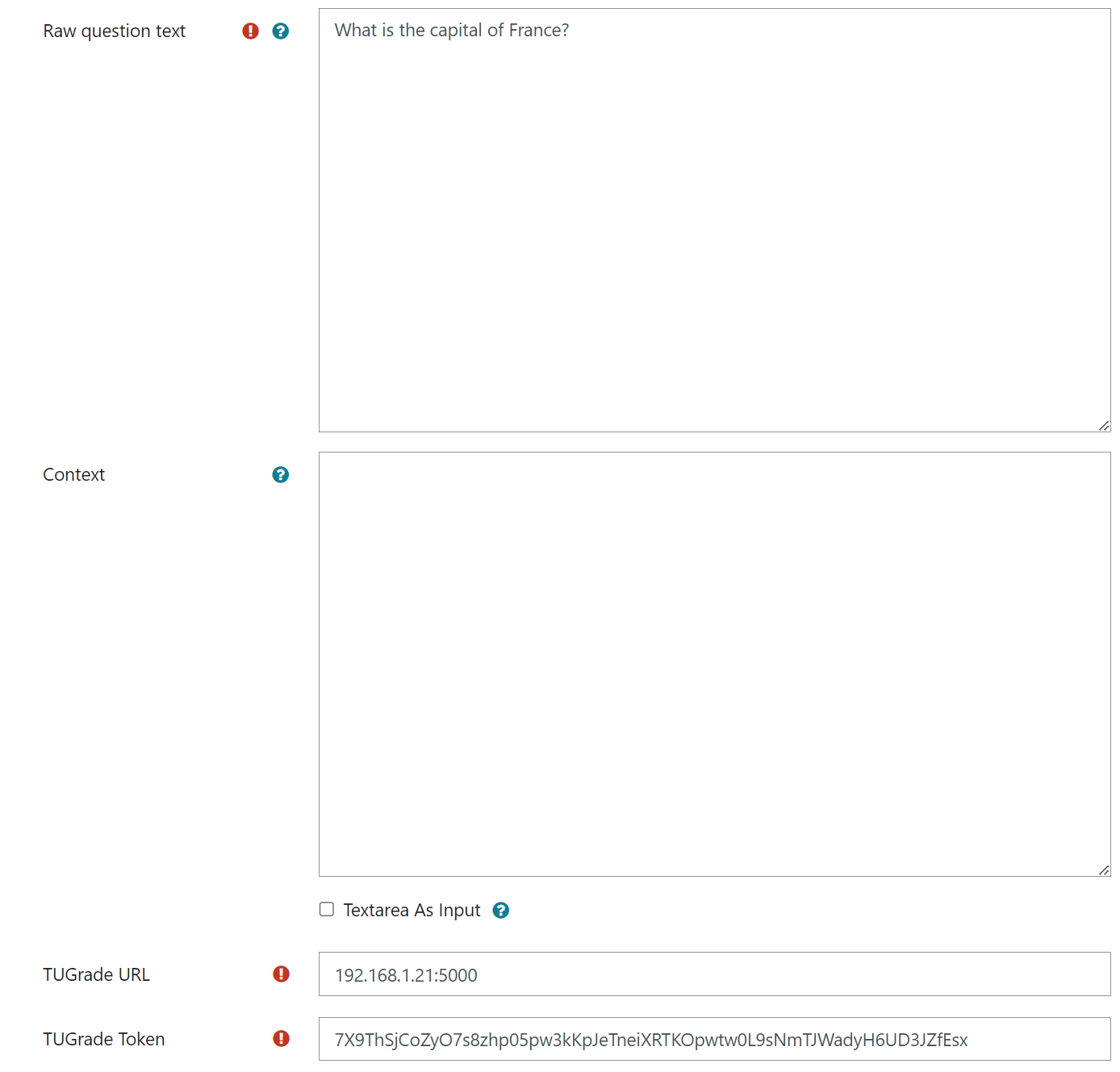

Raw question text for AI processing, context field, TUGrade URL and token authentication settings.

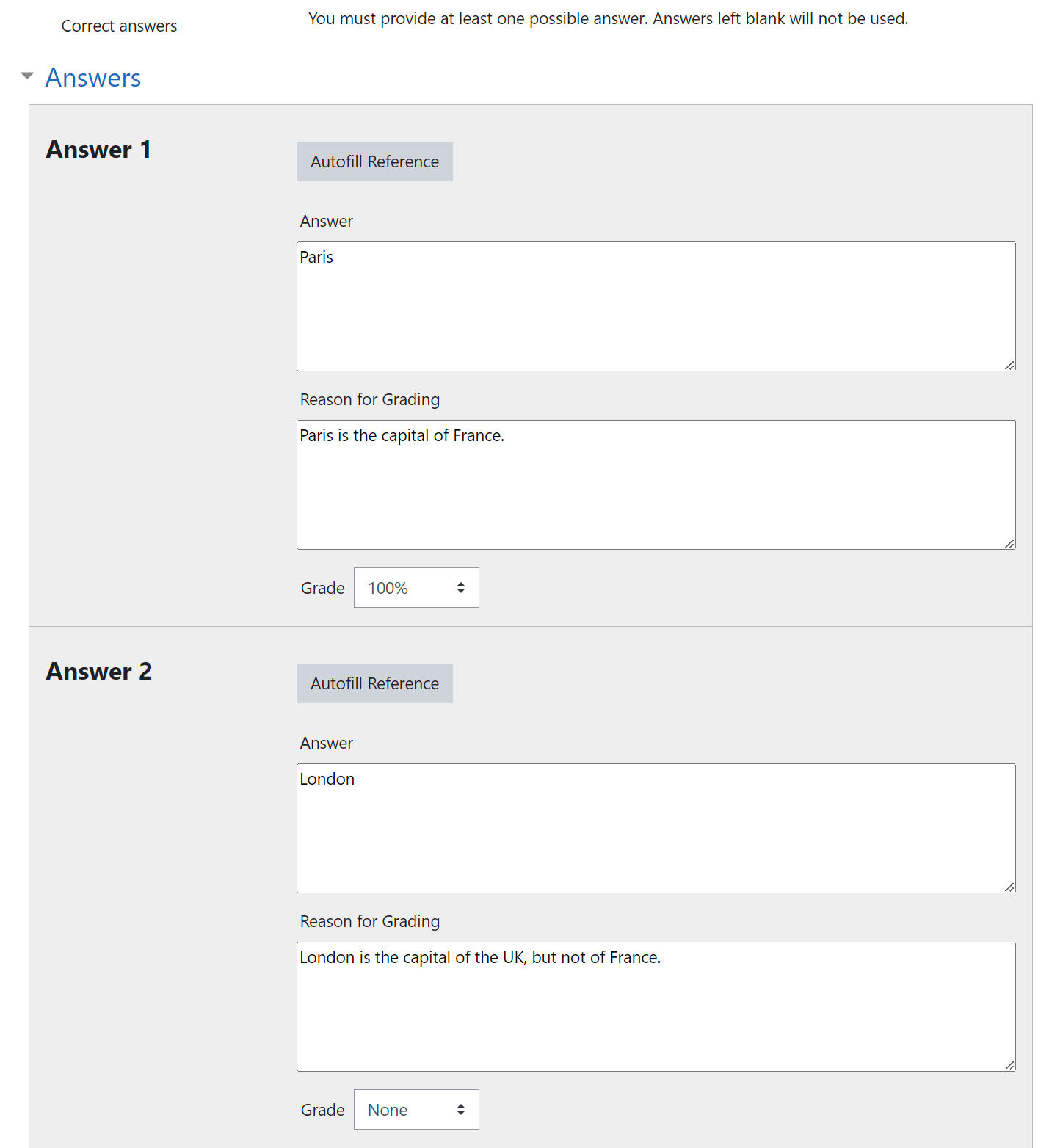

Reference answer configuration with grading scale, reason for grading, and autofill reference button.

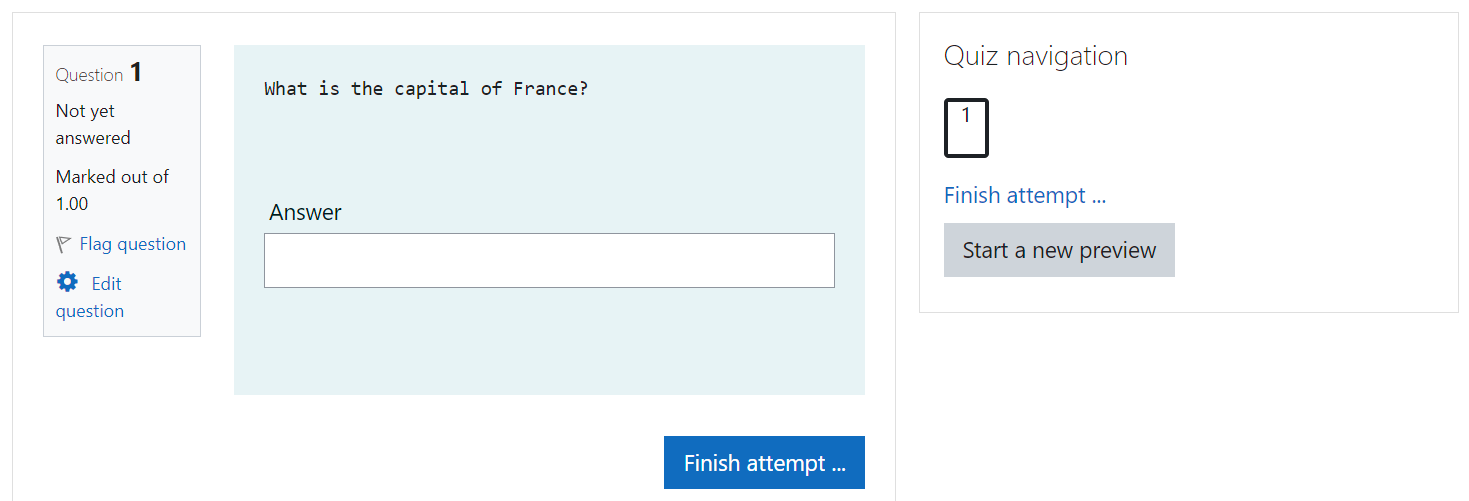

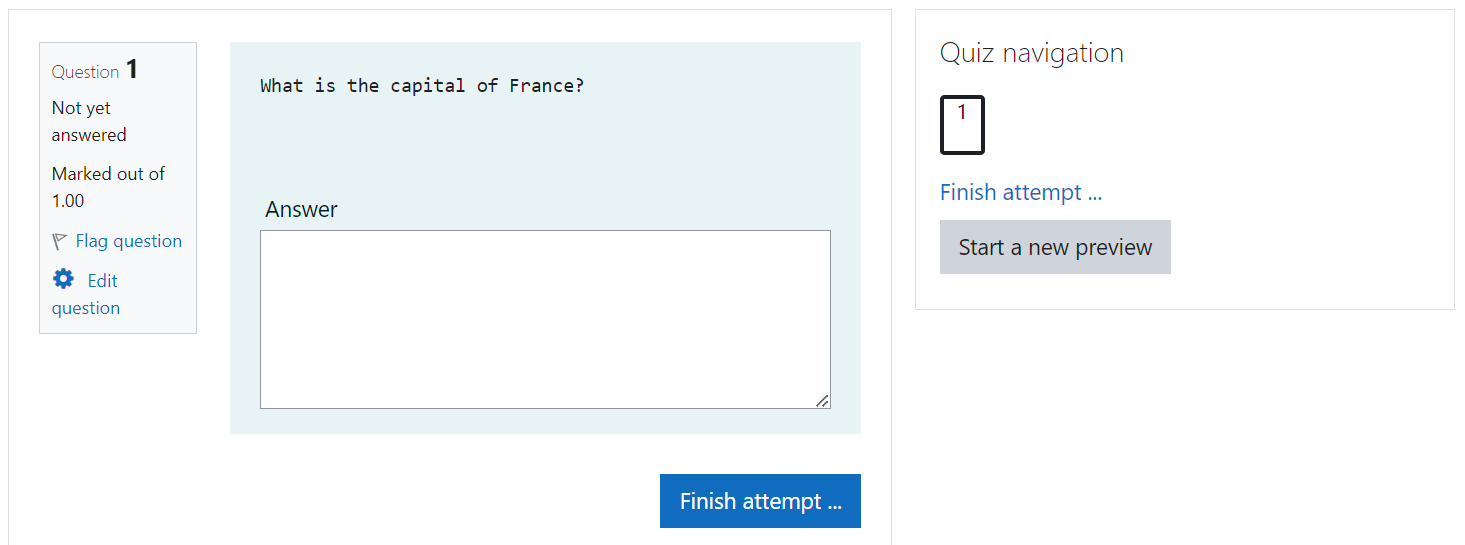

Student Input

Students can submit their answers using either a compact text field or an expanded text area:

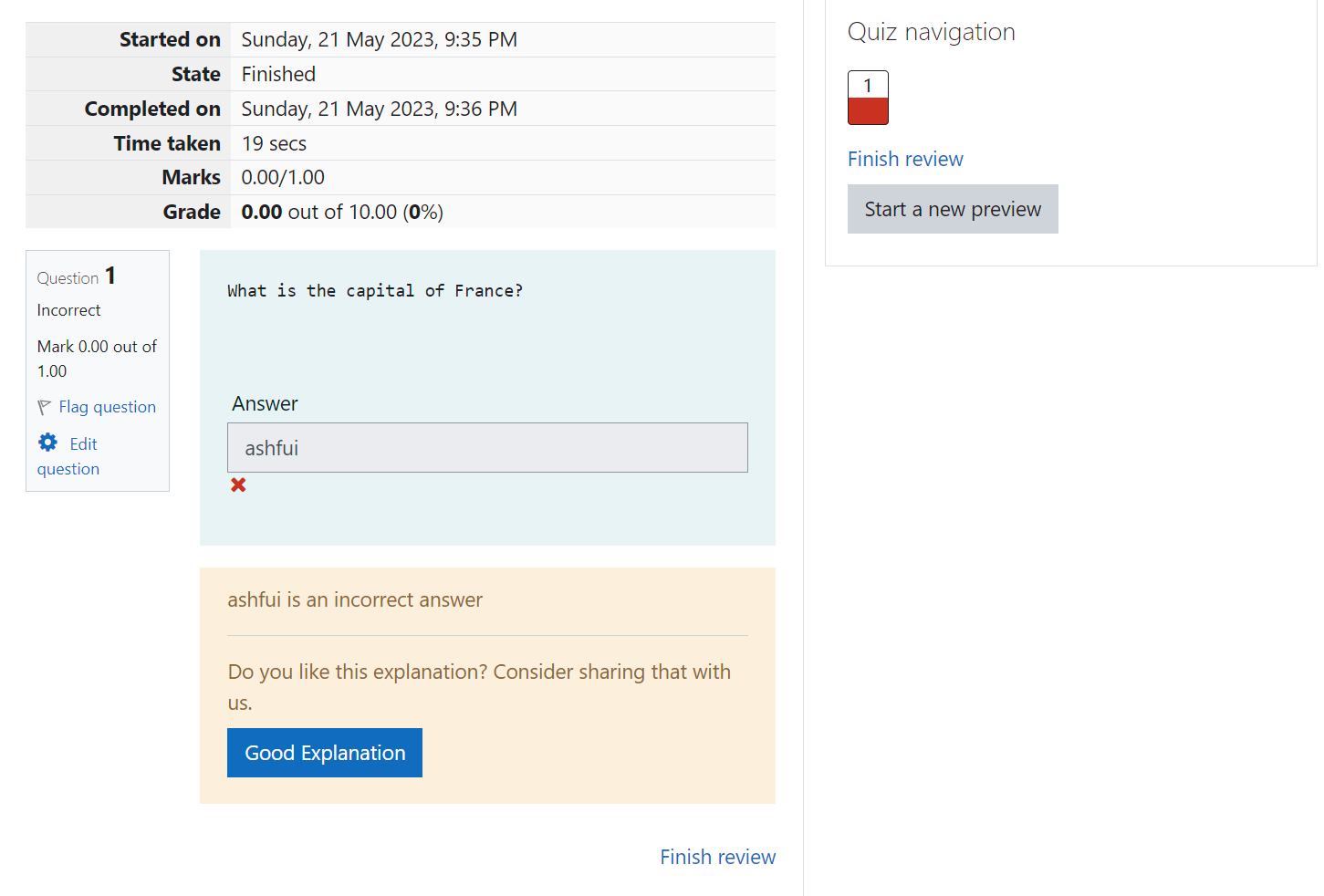

Evaluation & Feedback

After submission, students receive immediate feedback with an AI-generated grade and explanation.

Technical Details

Model

- Vicuna 7B v1.5 (Llama 2 based)

- Instruction-tuned for grading tasks

- GPTQ 4-bit quantization

- Only 3GB VRAM for model weights

- Greedy decoding (deterministic)

- KV-caching for fast inference
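
To show why KV-caching speeds up generation, the toy sketch below decodes a short sequence twice with a single attention head: once recomputing every key and value at each step, and once reusing cached ones. The weight matrices, the token vectors, and the function names are made up for this example, and details such as multiple heads and positional encodings are left out.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def attend(query, keys, values):
    """Scaled dot-product attention of one query over all keys and values."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


# Hypothetical 2x2 projection matrices for queries, keys, and values.
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.7]]
W_V = [[0.3, 0.4], [0.6, 0.1]]


def decode_without_cache(tokens):
    """Recompute keys and values for the whole prefix at every step."""
    outputs, projections = [], 0
    for step in range(1, len(tokens) + 1):
        prefix = tokens[:step]
        keys = [matvec(W_K, t) for t in prefix]
        values = [matvec(W_V, t) for t in prefix]
        projections += 2 * step
        outputs.append(attend(matvec(W_Q, prefix[-1]), keys, values))
    return outputs, projections


def decode_with_cache(tokens):
    """Project each token once and append its key and value to the cache."""
    outputs, projections = [], 0
    key_cache, value_cache = [], []
    for token in tokens:
        key_cache.append(matvec(W_K, token))
        value_cache.append(matvec(W_V, token))
        projections += 2
        outputs.append(attend(matvec(W_Q, token), key_cache, value_cache))
    return outputs, projections


TOKENS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
plain_outputs, plain_projections = decode_without_cache(TOKENS)
cached_outputs, cached_projections = decode_with_cache(TOKENS)
```

Both variants produce the same outputs, but the number of key and value projections grows quadratically with sequence length without the cache and only linearly with it.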

Fine-tuning

- LoRA adaptation (r=16)

- 350 training steps

- SAF dataset (Filighera et al., 2022)

- Discrete regression (0-9 scale)

- English and German support

- Zero-shot capable
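
For a sense of how small a rank-16 LoRA update is, the sketch below counts trainable parameters for one adapted weight matrix. LoRA replaces the update to a `d_out x d_in` matrix with two factors of shapes `d_out x r` and `r x d_in`. The 4096 x 4096 shape matches the hidden size of Llama 2 7B, but which matrices were adapted in TU Grade is not stated here, so the single-matrix example is an assumption.

```python
def lora_trainable_params(d_out, d_in, rank):
    """Parameters in the two low-rank factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)


def full_params(d_out, d_in):
    """Parameters in the original dense weight matrix."""
    return d_out * d_in


# Assumed example: one 4096 x 4096 projection matrix adapted with r = 16.
lora_count = lora_trainable_params(4096, 4096, 16)
dense_count = full_params(4096, 4096)
fraction = lora_count / dense_count
```

With these numbers the adapter trains 131,072 parameters per matrix instead of 16,777,216, which is 1/128 of the dense count.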

Infrastructure

- vLLM for optimized inference throughput

- Rust API for high-performance reliable serving

- PostgreSQL for persistent storage

- RAG for context-aware reference answers

- Token-based authentication

- 2x NVIDIA RTX 2080 Ti GPUs (11 GB VRAM each, ~22 GB total)
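
The roughly 3 GB figure for the quantized weights can be checked with simple arithmetic, as in the sketch below. The parameter count of about 6.74 billion for a 7B Llama 2 model is an approximation used for this example, and the estimate ignores the small overhead GPTQ adds for scales and zero points.

```python
def weight_memory_gib(num_parameters, bits_per_weight):
    """Memory needed for the weights alone, in GiB."""
    return num_parameters * bits_per_weight / 8 / 2**30


# Assumption: a "7B" Llama 2 model has roughly 6.74 billion parameters.
PARAMS_7B = 6.74e9

fp16_gib = weight_memory_gib(PARAMS_7B, 16)  # half-precision baseline
int4_gib = weight_memory_gib(PARAMS_7B, 4)   # 4-bit quantized weights
```

Under these assumptions the 4-bit weights need a little over 3 GiB, about a quarter of the half-precision footprint, which leaves most of the GPU memory for the KV cache and activations.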

Research Background

This project is based on Leon Camus's Master's thesis "TU Grade - To Grade using large language models" and related research on Transformers for automatic short answer grading. The system addresses data scarcity through zero-shot capabilities and introduces an explanatory grading approach that goes beyond simple scores.

Key Research Findings

- Llama 2 significantly outperforms Llama 1 in grading tasks

- Instruction-tuned models excel at zero-shot grading without fine-tuning

- Model-generated explanations are semantically closer to gold standards than human responses

- Fine-tuning has marginal impact on semantic quality but improves syntactic alignment

- Discrete regression (0-9 scale) shows limitations for capturing nuanced response quality

- KV-caching enables efficient real-time inference
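
The limitation of the discrete 0-9 scale noted in the findings above can be illustrated with a small sketch. It assumes answer quality is expressed as a normalized score between 0 and 1 before being mapped to a digit. That normalization and the function names are assumptions for this example, not a description of the thesis code.

```python
def to_discrete_grade(normalized_score):
    """Map a score in [0, 1] to the nearest integer grade from 0 to 9."""
    clamped = min(max(normalized_score, 0.0), 1.0)
    # Adding 0.5 before truncating rounds halves upward.
    return int(clamped * 9 + 0.5)


def from_discrete_grade(grade):
    """Map an integer grade from 0 to 9 back to a score in [0, 1]."""
    if not 0 <= grade <= 9:
        raise ValueError("grade must be between 0 and 9")
    return grade / 9


# Two answers of slightly different quality collapse onto the same grade.
grade_a = to_discrete_grade(0.58)
grade_b = to_discrete_grade(0.61)

# The largest possible rounding error is half a grade step.
max_rounding_error = 1 / 18
```

Each grade step covers about 11% of the score range, so differences smaller than that can disappear once the score is discretized.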

Current Status

TU Grade was deployed at TU Darmstadt as an experimental research system to evaluate AI-powered grading in real-world educational settings. The system processed student submissions and provided automated feedback during the evaluation period.

Evaluation Period (2024-2025)

- Experimental deployment at TU Darmstadt

- Institutional use for research purposes

- Evaluated AI grading effectiveness in practice

- Moodle plugin integrated with test courses

Project Status

- Research project completed (2025)

- Tool phased out in late 2025

- Replaced by newer solutions

- Contact for research collaboration

For inquiries about collaboration, deployment, or research opportunities, contact Leon Camus.